In 2025, global enterprises dumped a conservative estimate of $300 billion into AI infrastructure.

And then what? Walk into the data center of almost any Fortune 500 company today, and you’ll see the exact same absurd diorama: rows of brand-new GPU servers, LEDs frantically blinking, fans screaming, room temperatures optimized to a perfect 72°F—yet running actual business workloads that are less complex than a computer science undergrad's side project.

Welcome to the most ridiculous spectacle in the 2026 tech world: Enterprises bought the Ferrari, parked it in the climate-controlled garage, and are burning expensive fuel every day just to listen to the engine rev. Procurement hit their KPIs, CTOs flashed impressive server rack photos at board meetings, and CEOs proudly announced to Wall Street, "We are fully AI-native." But if you ask what actual commercial profit this astronomical compute has generated? Crickets. Nobody asks, and nobody dares to.

In the industry, these machines have earned a precise nickname: "Cyber-Bonsais." Carefully procured, meticulously housed, and watered monthly with massive electricity bills, their only function is to prove "we have it." They are fundamentally no different from the plastic plant in the boss's office that never blooms.

Compute $\neq$ Capability. Procurement $\neq$ Deployment. Deployment $\neq$ ROI. Each of these three gaps is a bottomless chasm, and together they are the most expensive "Hallucination Tax" the enterprise world is currently paying.

1. The Invisible "Geek Tax" and the Engineering Abyss

Hardware has a clear price tag. A flagship AI chip costs what it costs; the CFO closes their eyes, signs the PO, and it's done. But the real monster devouring your budget hides on the back of that invoice.

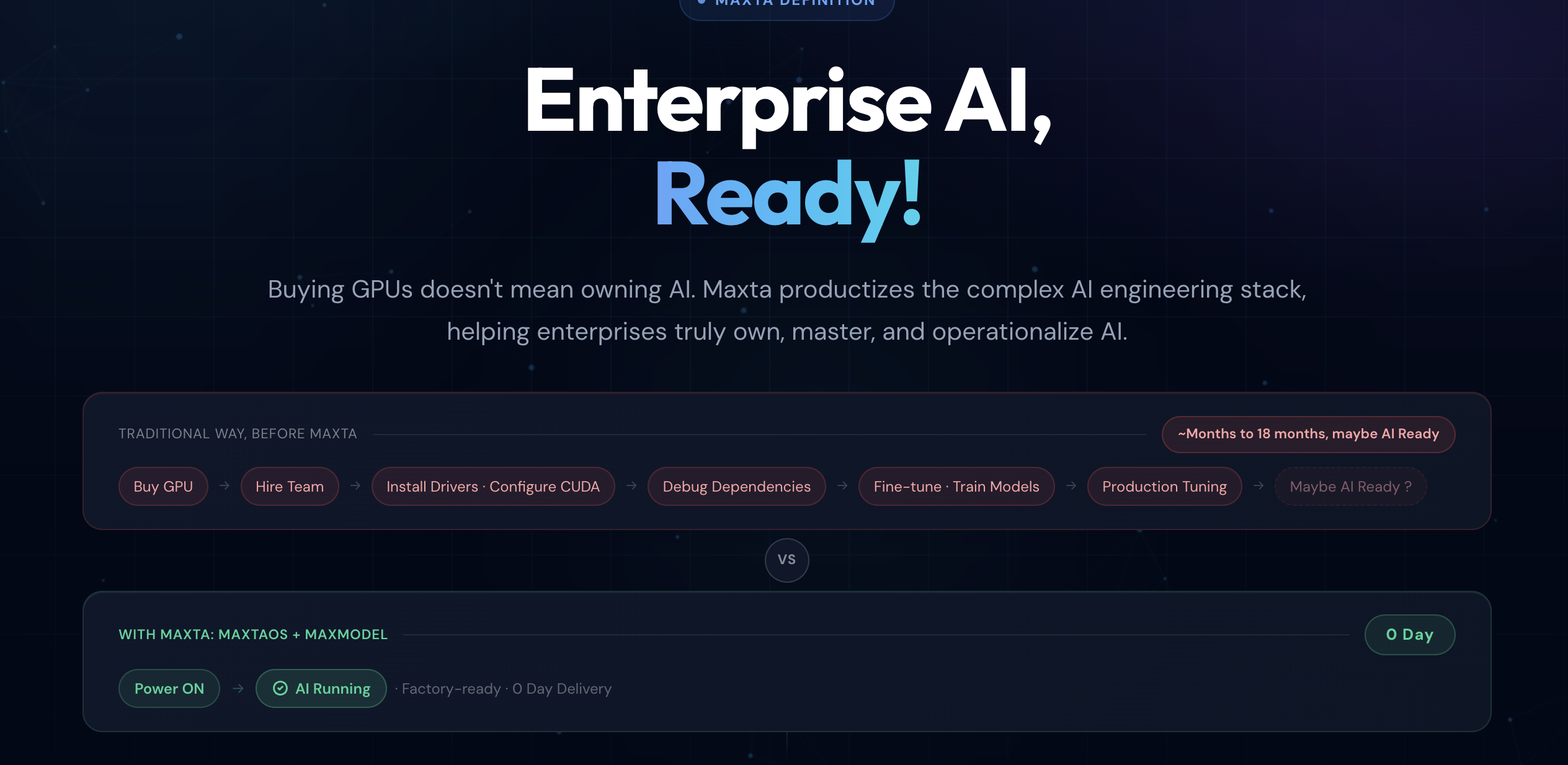

Taking an AI model from a polished demo to a true production environment requires crossing a desperate engineering abyss. Environments must be configured, CUDA versions aligned, containers orchestrated, quantization applied, inference pipelines built, and network isolation protocols handled. Every single step is a black hole for human capital and time.

Let’s do the brutal math: The hardware cost of a top-tier edge inference server might be $200,000. But to make it reliably output a single line of valuable data on a factory floor, you need at least two to three infrastructure engineers making $300k+ annually, spending 3 to 6 months navigating the trenches. The hidden integration cost is often 3x to 5x the hardware price.
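The arithmetic above can be checked with a short sketch. It counts base salary only, using the figures from the paragraph, and the function and variable names are illustrative. Under that assumption the direct labor cost comes out below the 3x to 5x range, so the rest would have to come from costs the paragraph does not itemize (overhead, stalled production, rework), none of which are modeled here.

```python
def salary_cost_range(salary_usd, engineers, months):
    """Return (low, high) salary-only cost in USD.

    engineers and months are (min, max) pairs; salary_usd is per engineer per year.
    Assumption: base salary only, no benefits, overhead, or downtime.
    """
    low = min(engineers) * salary_usd * min(months) / 12
    high = max(engineers) * salary_usd * max(months) / 12
    return low, high


hardware = 200_000  # edge inference server price quoted in the text
low, high = salary_cost_range(300_000, engineers=(2, 3), months=(3, 6))

print(f"salary-only cost: ${low:,.0f} to ${high:,.0f}")
print(f"multiple of hardware: {low / hardware:.2f}x to {high / hardware:.2f}x")
```

Swapping in a fully loaded cost per engineer, or adding a line for lost production time, is the quickest way to see which inputs push the multiple toward the higher range.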

This is the lethal "Geek Tax." You thought you were buying a plug-and-play production tool; instead, you bought a one-way ticket to a money pit. Most enterprises face the same grim reality: six months after the hardware arrives, the project is still stuck in "PoC Purgatory," eventually retiring as a beautiful Cyber-Bonsai.

2. Hardware Premiums as a Cover-Up for "Software Laziness"

As veterans with 17 years deep in the infrastructure trenches, we must burst a bubble everyone in the industry is silently ignoring.

Take edge inference as an example. What is the real performance gap between a $500 high-end terminal and a $150 standard x86 mini-PC running the exact same quantized 7B parameter model? After extreme, kernel-level software optimization, the answer makes a lot of people break into a sweat: The gap is negligible, and can even be entirely erased.

So what does that extra $350 buy you? Better chip packaging? Sure. But the more honest answer is: You bought the privilege of not having to stubbornly optimize your underlying software.

The hardware premium is fundamentally a fig leaf for software laziness.

When the operating system's scheduling mechanism is bloated, the inference framework lacks deep tuning, and memory management is a disaster, what is the most brute-force solution? Buy a more expensive machine with more VRAM. It is using financial violence to cover up engineering incompetence. This exposes an industry deformity: the AI hardware supply chain is highly mature, but the underlying AI software stack (the OS) is still in a crude, barbaric era. There are no standards, no reliable abstractions, and every deployment feels like a customized, open-skull surgery.

3. The Arrogance of IT Toys in an OT World

If cloud AI is just burning money, bringing AI to the industrial frontline (the Edge) is a full-blown disaster. IT (Information Technology) and OT (Operational Technology) operate under two entirely different laws of physics.

IT's logic embraces uncertainty: Found a bug? Push a hotfix. Server overload? Auto-scale the cloud. But in OT logic: A production line stops for one second, and you lose tens of thousands. A machine misjudges once, and someone could die. The industrial floor doesn't need "elastic architecture" or "graceful degradation." It demands one thing: Absolute Determinism.

Why has the decades-old PLC (Programmable Logic Controller) ruled factories for half a century? Because it achieved what Silicon Valley elites still cannot: air-gapped, plug-and-play, day-zero delivery, working the moment it is installed. No network configuration, no command lines.

Now look at the garbage the AI industry is handing to manufacturing: A server requiring constant internet activation, and a pile of Docker containers throwing daily dependency errors. A true industrial edge AI node must be a pure black box: Power on. Ready. Execute. If your device requires an architect to camp on-site for three weeks, you aren't selling a product—you're selling a swamp that can never be delivered.

4. Breaking the Hallucination

The biggest tragedy of enterprise AI spending in 2026 isn't that companies didn't spend enough; it's that they spent it on the wrong things. Enterprises are paying for the "deployment process" instead of the "business result."

True AI capability shouldn't be priced by "how many GPUs you have." It should be traded in units of determinism: Can the model serve stably on Day 1? Can it output locally when the network is severed? When a worker hits a button, does it instantly infer?
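One way to put a number on "units of determinism" is to measure how often a local inference call misses a fixed deadline. The sketch below is a minimal illustration, not a benchmark suite: `deadline_report` and `fake_infer` are hypothetical names, `fake_infer` stands in for a real on-device model call, and the 50 ms deadline is an arbitrary assumption.

```python
import time


def deadline_report(infer, inputs, deadline_s):
    """Time each call to infer and count calls slower than deadline_s.

    inputs must be non-empty. Returns call count, worst latency, and miss rate.
    """
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        latencies.append(time.perf_counter() - start)
    misses = sum(1 for t in latencies if t > deadline_s)
    return {
        "calls": len(latencies),
        "worst_s": max(latencies),
        "misses": misses,
        "miss_rate": misses / len(latencies),
    }


def fake_infer(x):
    # Hypothetical stand-in for a local model call; replace with the real one.
    return x * 2


report = deadline_report(fake_infer, range(1000), deadline_s=0.05)
print(report)
```

Running the same report with the network cable unplugged, and again right after a cold boot, would cover the other two questions: whether the node still answers offline, and whether it answers on Day 1.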

Stop paying for compute. Stop subsidizing bottomless debugging. Enterprises should pay for only one thing: the absolute certainty that the moment it is plugged in, profit is generated. In the endgame of this compute frenzy, the winner won't be the company hoarding the most GPUs, but the disruptor who flattens the engineering abyss, making large models as "plug-and-play" as electricity and water.